AI Weekly Trends: Highly Opinionated Signals from the Week [CY26W8]

🔗 Learn more about me, my work, and how to connect: maeste.it – personal bio, projects, and social links.

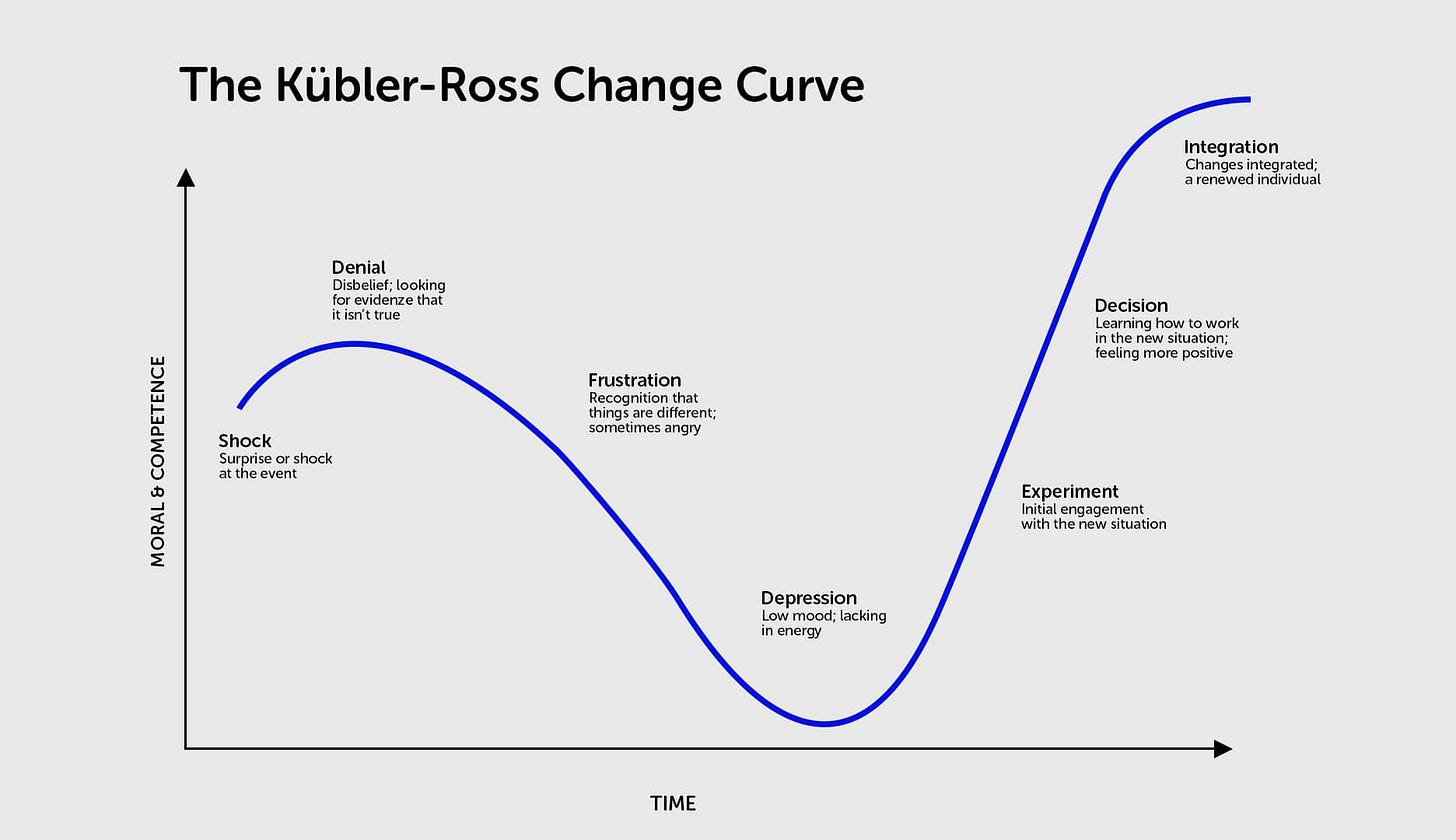

This week I want to start with what I’ve been observing lately on social media. Calling it out probably won’t make me new friends, but I believe what’s happening in the world of software engineers (and related fields) is quite evident. There was a first phase, which I’d place roughly until Christmas, where the majority of software “insiders” were posting things like: “AI can’t replace a good engineer, it makes too many errors,” “Show me something that isn’t just a demo in a PR,” “Come on, AI can only write boilerplate code.” Pure denial. Now we’re in a second phase with posts like: “If AI gives us more speed, can someone explain why I’m working twice as hard,” “AI isn’t taking our jobs, it’s actually making them messier,” “We all need to become software architects, we were supposed to work less but they’re asking even more of us.” Take a look at the well-known Kübler-Ross change acceptance curve I’m showing below... and brace yourselves for the depression phase...

Before I let you dive into the news and my analysis of what happened this week, let me share what has happened or is coming up on my public agenda, for those who’d like to follow my talks or meet me in person (I love exchanging ideas with anyone willing to do so):

Podcast with Alessio and Paolo:

On Wednesday we released an interview with Daniele Zonca on AI in the enterprise world

On Saturday an old friend, Antonello Mantuano, came by for an interesting chat

On March 12th we’ll be at JUG Milano to record our first live episode. Don’t miss it

We’re working on more interviews and episodes with very interesting guests

Solo

I was interviewed again on the opensource podcast. This time I talk about agents, AI, and AGI. It drops here on the 26th. Listen to it and send me your feedback

On February 26th I’ll be at this event in Milan (as an attendee, but happy to exchange ideas). Beyond my presence, the event itself is worth following

On March 24th I’ll be at Voxxed Days Zürich. Alessio and I are presenting a talk on AI-assisted coding

On May 30th I’ll have the honor of being one of the PyCon Italia speakers

🤖 AI Models News and Research

Takeaways for AI Engineers

Takeaway 1: Sonnet 4.6 costs less per token than Opus 4.5 but uses ~2.5x more tokens: smaller models compensate with more reasoning, so the real price/performance comparison is less linear than it seems.

Takeaway 2: Google’s entry with Lyria 3 into generative music confirms this market is already concrete and consolidating, following Suno and other pioneers.

Takeaway 3: Model competition is global and multimodal: Gemini 3.1 Pro (reasoning), Qwen 3.5 (vision, 201 languages), PersonaPlex (real-time voice) — the frontier advances on multiple axes simultaneously.

Action Items:

Compare the real TCO of Sonnet 4.6 vs Opus 4.5 in your use cases, considering total token consumption.

Experiment with PersonaPlex for voice interaction with coding agents on Linux/open source.

What’s happening this week?

Another new model in Google’s Gemini family: with Lyria 3, the company enters the AI-generated music market too, and does so in grand style and with great power as usual. And it’s far from a minor market: since the early deals with Suno and other platforms, it’s already moving a lot of money. The same will probably happen with video production, but AI-generated music is already a reality now.

But the most relevant release in the Gemini household is Gemini 3.1 Pro, which shouldn’t be confused with Gemini 3.0 Deep Think from last week: it’s a new version of the model for all subscribers. And it significantly improves results across all benchmarks, most notably a doubled score on ARC-AGI-2.

Anthropic also announces an important release with Sonnet 4.6 which, according to Anthropic’s benchmarks and internal reports, matches — or nearly matches — Opus 4.5 at a much lower per-token price. But to do so it uses many more tokens (estimated 2.5x), so on one hand the total cost is indeed lower than Opus 4.5 but not dramatically so, and on the other hand it suggests that smaller SOTA models achieve higher performance with more reasoning. Not unexpected, but an interesting indirect confirmation.
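To make the price/token trade-off concrete, here is a minimal sketch of the arithmetic. Every price and token count in it is a placeholder chosen for illustration, not Anthropic’s published pricing; only the ~2.5x multiplier comes from the estimate above, and I’m assuming it applies to output tokens only. The names `ModelProfile`, `task_cost`, and `break_even_multiplier` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    price_in_per_mtok: float    # USD per million input tokens (placeholder)
    price_out_per_mtok: float   # USD per million output tokens (placeholder)
    output_multiplier: float    # output tokens used relative to the baseline


def task_cost(model: ModelProfile, input_tokens: int,
              baseline_output_tokens: int) -> float:
    """Cost in USD of one task, scaling output tokens by the model's multiplier."""
    output_tokens = baseline_output_tokens * model.output_multiplier
    return (
        input_tokens * model.price_in_per_mtok
        + output_tokens * model.price_out_per_mtok
    ) / 1_000_000


def break_even_multiplier(small: ModelProfile, large: ModelProfile,
                          input_tokens: int,
                          baseline_output_tokens: int) -> float:
    """Output multiplier at which the small model costs as much as the large one."""
    large_cost = task_cost(large, input_tokens, baseline_output_tokens)
    small_input_cost = input_tokens * small.price_in_per_mtok / 1_000_000
    small_cost_per_output_token = small.price_out_per_mtok / 1_000_000
    return (large_cost - small_input_cost) / (
        baseline_output_tokens * small_cost_per_output_token
    )


# Hypothetical profiles: replace the prices with the ones on your own invoice.
large = ModelProfile("large-model", 10.0, 50.0, 1.0)
small = ModelProfile("small-model", 2.0, 10.0, 2.5)

large_cost = task_cost(large, 10_000, 2_000)
small_cost = task_cost(small, 10_000, 2_000)
threshold = break_even_multiplier(small, large, 10_000, 2_000)
print(f"large: ${large_cost:.3f}  small: ${small_cost:.3f}  "
      f"break-even multiplier: {threshold:.1f}x")
```

With these made-up numbers the small model stays cheaper until it needs about nine times the output tokens. With a narrower price gap the threshold drops quickly, which is why measuring total tokens per task in your own use cases matters more than reading the price list.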

The Chinese are far from standing still either, with Qwen publishing version 3.5 of their flagship model. Natively multimodal, with an interesting hybrid architecture and support for a remarkable 201 languages. Also interesting is DuckDuckGo’s entry into the AI market, bringing their privacy policy to image generation as well.

I also want to highlight with great interest NVIDIA’s conversational speech-to-speech model. I’ll spend a few hours on it soon to see if it can help bring voice interaction with agents (coding and otherwise) to the open source world (Linux in particular), which is very dear to me.

Links of the week

Gemini 3.1 Pro — Google releases Gemini 3.1 Pro with doubled ARC-AGI-2 score, significant advancement in abstract reasoning.

DuckDuckGo AI Image Editing — Privacy-first AI image editing on Duck.ai, no account required and no data storage.

Gemini Lyria 3 — AI music generation integrated into the Gemini app: 30-second tracks from text or images.

Claude Sonnet 4.6 — Anthropic releases Sonnet 4.6 with 1M token context window, preferred over Opus 4.5 in 59% of cases.

Qwen3.5 — Vision-language model with 397B parameters (17B active), hybrid architecture and support for 201 languages.

PersonaPlex — NVIDIA real-time full-duplex speech-to-speech model for interactive voice conversations.

🕸️ Agentic AI

Takeaways for AI Engineers

Takeaway 1: AI agent security is an architectural problem, not solvable with prompt-based safeguards alone: sandboxing, granular permissions, and dedicated logging are needed.

Takeaway 2: Harness engineering is emerging as an evolution of prompt and context engineering: changing data, tools, MCP, and skills can have more impact on agent performance than changing the model itself.

Takeaway 3: Agent autonomy is growing steadily and expert users are shifting from approving individual actions to strategic monitoring — a paradigm shift in human-agent interaction.

Action Items:

Review the security architecture of your agents: verify sandboxing, permission scope, and logging of autonomous actions.

Experiment with harness engineering: modify your agents’ tools, data, and context before changing the model to improve results.

What’s happening this week?

Let’s start with something we discussed last week. The creator of OpenClaw has decided to join OpenAI. I’m confident that with the company’s support, he’ll bring more disruptive ideas to this young market. I strongly hope his work can continue in the community as well, because one of the most interesting aspects of OpenClaw was bringing Open Source back to the center of the debate.

Two main themes I want to highlight with the links in this section. The first concerns a growing attention to agent security, with architectural problems to address and best practices to explain and instill in developers and users. Security has always been a topic as central as it is neglected, and with generative AI it becomes even more important to understand the risks and how to mitigate them.
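As a rough illustration of what “architectural” means here, the sketch below puts a permission check and an audit log between the agent and its tools, instead of relying on the prompt to behave. It is an application-level gate under my own assumptions (the names `ToolPolicy`, `permits`, and `run_tool` are hypothetical), and it does not replace OS-level sandboxing.

```python
import logging
from dataclasses import dataclass
from pathlib import Path
from typing import Callable

audit_log = logging.getLogger("agent.audit")


@dataclass(frozen=True)
class ToolPolicy:
    allowed_tools: frozenset[str]
    workspace: Path

    def permits(self, tool: str, target: Path) -> bool:
        """Allow a call only for listed tools and paths inside the workspace."""
        if tool not in self.allowed_tools:
            return False
        root = self.workspace.resolve()
        # Resolving collapses ".." segments; absolute targets replace the root.
        resolved = (root / target).resolve()
        return resolved.is_relative_to(root)


def run_tool(policy: ToolPolicy, tool: str, target: Path,
             action: Callable[[Path], str]) -> str:
    """Run an agent-requested action through the policy, logging every decision."""
    if not policy.permits(tool, target):
        audit_log.warning("DENIED tool=%s target=%s", tool, target)
        raise PermissionError(f"{tool} on {target} is outside the agent's scope")
    audit_log.info("ALLOWED tool=%s target=%s", tool, target)
    return action(policy.workspace / target)


# Hypothetical policy: the agent may only read files under its workspace.
policy = ToolPolicy(frozenset({"read_file"}), Path("/tmp/agent-workspace"))
```

The point of the design is that the deny decision and the log entry happen in code the model cannot talk its way around; the model only ever sees the result or the `PermissionError`.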

The second theme is measuring agent results and autonomy, from OpenAI and Anthropic benchmarks to those showing how changing the harness (i.e., everything used to extend an LLM: data, tools, MCP, skills, etc.) can change agent results. Keep the word harness in mind, because harness engineering is increasingly being discussed as an evolution of context engineering and prompt engineering.
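One way to picture harness engineering is an evaluation loop where the model is held fixed and only the harness changes. The sketch below is a toy under loud assumptions: `stub_model` is a deterministic stand-in for a real LLM call, the `calculator` tool and the two tasks are invented for illustration, and none of this reflects how the benchmarks mentioned above are actually run.

```python
from dataclasses import dataclass, field
from typing import Callable

Tool = Callable[[str], str]


@dataclass(frozen=True)
class Harness:
    name: str
    system_prompt: str
    tools: dict[str, Tool] = field(default_factory=dict)


@dataclass(frozen=True)
class Task:
    prompt: str
    expected: str


def calculator(expression: str) -> str:
    """Toy tool: evaluates 'a + b' or 'a * b' for integers."""
    left, op, right = expression.split()
    a, b = int(left), int(right)
    return str(a + b if op == "+" else a * b)


def stub_model(harness: Harness, prompt: str) -> str:
    """Stand-in for an LLM call: delegates to a tool if the harness offers one."""
    tool = harness.tools.get("calculator")
    return tool(prompt) if tool else "unknown"


def pass_rate(model: Callable[[Harness, str], str], harness: Harness,
              tasks: list[Task]) -> float:
    """Fraction of tasks whose answer matches the expected string exactly."""
    passed = sum(model(harness, task.prompt) == task.expected for task in tasks)
    return passed / len(tasks)


tasks = [Task("2 + 3", "5"), Task("4 * 6", "24")]
bare = Harness("bare", "You are a helpful assistant.")
tooled = Harness("with-calculator", "Use the calculator tool for arithmetic.",
                 {"calculator": calculator})

# Same model, two harnesses: only the tools and prompt differ.
for harness in (bare, tooled):
    print(harness.name, pass_rate(stub_model, harness, tasks))
```

In a real setup you would swap `stub_model` for a call to your provider and keep the task set and scoring identical across runs, so that any change in the score can be attributed to the harness rather than to the model.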

Links of the week

Secure Sandboxing for Local Agents — Cursor reduces agent interruptions by 40% with native per-platform sandboxing (Seatbelt, Landlock, WSL2).

Harness Engineering for Deep Agents — LangChain’s coding agent jumped from Top 30 to Top 5 on Terminal Bench 2.0 with a single harness change.

EVMbench — OpenAI benchmark for evaluating AI agents on smart contract vulnerabilities, both defensive and offensive use.

OpenClaw creator joins OpenAI — OpenClaw’s creator joins OpenAI; the project remains open and independent within a new foundation.

The problem isn’t OpenClaw. It’s the architecture. — OpenClaw’s vulnerabilities reveal structural risks in agent ecosystems: sandboxing and restricted permissions are needed.

Measuring AI Agent Autonomy — Anthropic analyzes millions of human-agent interactions: growing autonomy, users shifting toward strategic monitoring.

💻 AI Assisted Coding

Takeaways for AI Engineers

Takeaway 1: Open source in the era of AI agents is transforming: the value is no longer just in code written, but in the ability to translate ideas into software — and AI amplifies this capability.

Takeaway 2: Configuring coding agents (CLAUDE.md, skills, workflows) is becoming a key competency: well-structured files and the “less is more” principle make the difference in output quality.

Action Items:

Review and optimize your CLAUDE.md (or AGENTS.md) following the “less is more” principle: fewer instructions, more targeted.

Explore Anthropic’s official guide on skills to create reusable workflows in your coding agents.

What’s happening this week?

Let’s start with an article from one of the founders of Hugging Face on how open source is changing in the era of agentic engineering. It’s changing from so many perspectives that the article is worth reading in full. I’ll just leave you with a reflection I also shared on X: developer ego used to matter a lot in open source. On the other hand, I think open source developers (and their egos) express themselves mostly by turning ideas, sometimes brilliant ones, into code, and AI can only help with that.

Other noteworthy reads for those doing AI Engineering this week: Anthropic’s guide to creating skills (not a brand new article, but I don’t think I’ve flagged it before) and an interesting article on how to write better CLAUDE.md (or AGENTS.md) files.
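If you want a quick, admittedly crude way to apply “less is more” to your own file, a small script can at least tell you how much you are asking the agent to read. This is a sketch under my own assumptions: the function names are hypothetical, and the 60-line budget is an arbitrary number I picked, not a recommendation from either article.

```python
def instruction_stats(markdown_text: str) -> dict[str, int]:
    """Count non-empty lines, headings, and bullet instructions in a Markdown file."""
    lines = [line.strip() for line in markdown_text.splitlines() if line.strip()]
    return {
        "lines": len(lines),
        "headings": sum(line.startswith("#") for line in lines),
        "bullets": sum(line.startswith(("- ", "* ")) for line in lines),
    }


def over_budget(stats: dict[str, int], max_lines: int = 60) -> bool:
    """True when the file exceeds a self-imposed line budget (60 is arbitrary)."""
    return stats["lines"] > max_lines


# Invented sample content standing in for a real CLAUDE.md.
sample = """# Project
- Run tests with `make test`
- Prefer small, typed functions

Keep answers short.
"""
stats = instruction_stats(sample)
print(stats, "over budget:", over_budget(stats))
```

To check a real file, read it with `pathlib.Path("CLAUDE.md").read_text()` and pass the text to `instruction_stats`. A line count says nothing about quality, but a file that keeps growing is usually a sign that instructions are being added without being pruned.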

Take a look at the other articles too, especially if you want to make the most of your OpenRouter subscription or experiment with next-generation vector databases.

Links of the week

How Open Source Changes in the Agentic Coding Era — Reflection on the transformation of open source when AI agents autonomously contribute to code.

ZVEC — Alibaba’s in-process vector database, lightweight and fast, for similarity searches without external dependencies.

Free Models Router — OpenRouter meta-router that randomly selects among free models, useful for prototyping and testing.

Complete Guide to Building Skills for Claude — Anthropic’s official guide to creating reusable skills for Claude agents, “tiny CLI” pattern.

CLAUDE.md Masterclass — Complete guide on optimizing CLAUDE.md files for Claude Code, “less is more” principle.

🏢 Business and Society

Takeaways for AI Engineers

Takeaway 1: AI as an exoskeleton doesn’t just enhance — it enables previously unthinkable work. The metaphor goes beyond simple amplification and redefines what’s possible.

Takeaway 2: GPT-5.2 deriving an original result in theoretical physics is a concrete signal: AI is already generating new scientific knowledge, regardless of AGI timelines.

Takeaway 3: Choosing AI today is no longer about choosing a model: Mollick’s framework (Models, Apps, Harness) helps navigate an increasingly layered ecosystem.

Action Items:

Read Mollick’s article and evaluate which layer (Models, Apps, Harness) has the most impact on your current workflow.

Reflect on how AI is transforming your work: are you just enhancing existing tasks or enabling previously unthinkable activities?

What’s happening this week?

After last week’s strong stance on AGI, its timelines, and its impacts, in this section I deliberately sought out different perspectives, including some that oppose mine. So here you’ll find links about significant advances in using AI for scientific research (theoretical physics), but also arguments that AGI is distant. The viewpoint I prefer compares AI to an exoskeleton, a way to enhance those who use it, although even here the term needs a clear definition to avoid being misunderstood. My vision is precisely that of an exoskeleton that not only enhances but also enables previously unthinkable work, or completely transforms the perception of those who use it.

Also interesting is Ethan Mollick’s article to help choose the right tools in this transformative moment.

Links of the week

A Guide to Which AI to Use in the Agentic Era — Ethan Mollick proposes a 3-layer framework (Models, Apps, Harness) for navigating AI tool selection.

Why I don’t think AGI is imminent — Current Transformers have fundamental architectural limitations; AGI will likely require radically different approaches.

GPT-5.2 Derives a New Result in Theoretical Physics — GPT-5.2 Pro proposes a new formula for gluon scattering amplitudes, verified by physicists from IAS, Harvard, and Cambridge.

Stop Thinking of AI as a Coworker. It’s an Exoskeleton — AI as an amplifier of human capabilities, not a replacement: the exoskeleton model is more realistic and productive.

ByteDance Building Out AI Team in US — ByteDance hiring nearly 100 employees for its AI division in the US, spanning research and content generation.


The Kübler-Ross framing is smart, but there is a timing issue. Electrification took 30 years to show up in manufacturing productivity statistics after widespread installation. We are 3 years into enterprise AI adoption. The negotiation-to-depression transition on the curve is actually where productivity accelerates underneath the surface. We can't see it yet because most organisations have bolted AI onto old workflows rather than redesigned